Zaizi at AWS Summit

We had the pleasure of attending the AWS Summit at ExCeL London on July 7th 2016. What a fantastic turnout, with over 5,000 people ready and eager to learn. We arrived by 9 AM and, after registering, made our way to ExCeL’s auditorium, which was equipped with massive screens and audio systems. It felt like we were about to attend a David Guetta concert (a pretty impressive setup)!

KEYNOTE – Dr Werner Vogels, CTO, Amazon

Dr Werner Vogels, CTO of Amazon, delivered the keynote of the day, commenting on some recent success stories presented by AWS partners. It was impressive to see a CTO admit how they failed in their first transition from a monolithic architecture to services: they focused on data types instead of functionality. They learned from their errors and succeeded with the later transition from services to microservices.

A key message of the day was that migrating content to AWS is simple. AWS offers three different methods depending on the amount of data to migrate, from small projects up to petabytes of data.

What caught our eye were the early signs that you are not yet at microservices level. We summarised them as:

- Different services do coordinated deployments

- A change in one service has unexpected consequences or requires a change in other services

- Services share a persistence store (this one and the next actually represent the failure in the first transition mentioned above)

- You cannot change your service’s persistence tier without anyone caring

- Engineers need intimate knowledge of the designs and schemas of other teams’ services

As we listened, it became apparent that we have had, or are still having, similar problems in our projects and that it would be amazing to overcome them. He introduced a new architectural concept built on AWS Lambda functions that could revolutionise how businesses design and build their systems going forward. They called this ‘the serverless architecture’.

We split the day up between us to get the most out of the different sessions. We chose topics that we see as the key areas of interest for our customers right now, such as security, scalability and the need for a data-driven architecture. Here are our thoughts, without being too technical (if that is even possible for us!).

Big Data Architectural Patterns And Best Practices On AWS

At the beginning of the day we attended the talk ‘Big data architectural patterns and best practices on AWS’.

Ian Meyers, a solutions architect, talked about architectural principles: always separate compute and storage, use the right tool for the job (what he called “data sympathy”), and use managed services with open technologies.

It was interesting to see how he walked through the whole data process (collect, store, process/analyse and consume) and how to choose the right architecture for each step. For example, for the data store we need to consider the data structure (fixed schema, key-value), the access patterns (store data in the format in which you will access it), the access frequency (how often you will access it) and the cost.

Getting Started With AWS Security

As in the other areas, the number of microservices related to security is impressive, covering:

- User and access control (AWS IAM)

- Detective controls (AWS CloudTrail, Amazon CloudWatch)

- Cryptographic services (AWS Key Management Service, AWS CloudHSM)

- Change management (AWS Config, AWS Config Rules)

- … and others

Before going into the services in detail, though, it is essential to understand key concepts such as AWS Regions and Amazon Virtual Private Cloud. We highly recommend having a look at the free training that AWS provides, “Security Fundamentals on AWS”.

Protecting Your Data With Encryption On AWS

If the previous session was a walkthrough of the microservices available on AWS to cover the areas of Security, this one focused in detail on encryption.

Basically, AWS is able to address three questions that we have all faced in the past:

- Where are the keys stored? (Hardware you own? Hardware the cloud provider owns?)

- Where are the keys used? (Client software you control? Server software the cloud provider controls?)

- Who can use the keys? (Users and applications that have permissions? Cloud provider applications you give permissions to?)

AWS has a wide and flexible set of options to cover the requirements behind these three questions. AWS KMS is one of the AWS managed services that makes key management easier, and it integrates with several other AWS services. A common question when assessing whether or not to use KMS is: why should we trust AWS to manage our keys? Well, apart from the several KMS features that make it a reliable and secure service, there was a very good answer from the speaker, Dave Walker:

“If you don’t trust us, you can trust our auditors”

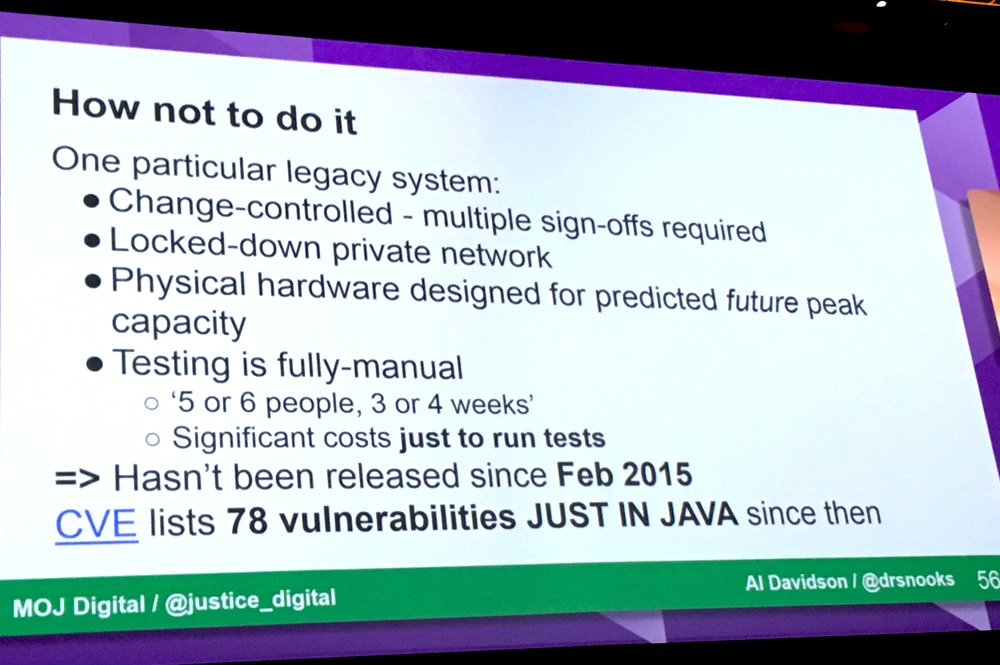

The use case presented in this talk was particularly interesting, especially for those of us who are involved in UK Government projects. Alistair Davidson, Technical Architecture Lead for the Ministry of Justice, talked about the Government’s journey to the cloud. He mentioned several things, but to avoid this blog being too long we are going to highlight two of his slides. The first one is ‘How not to implement a secure government system in the cloud’:

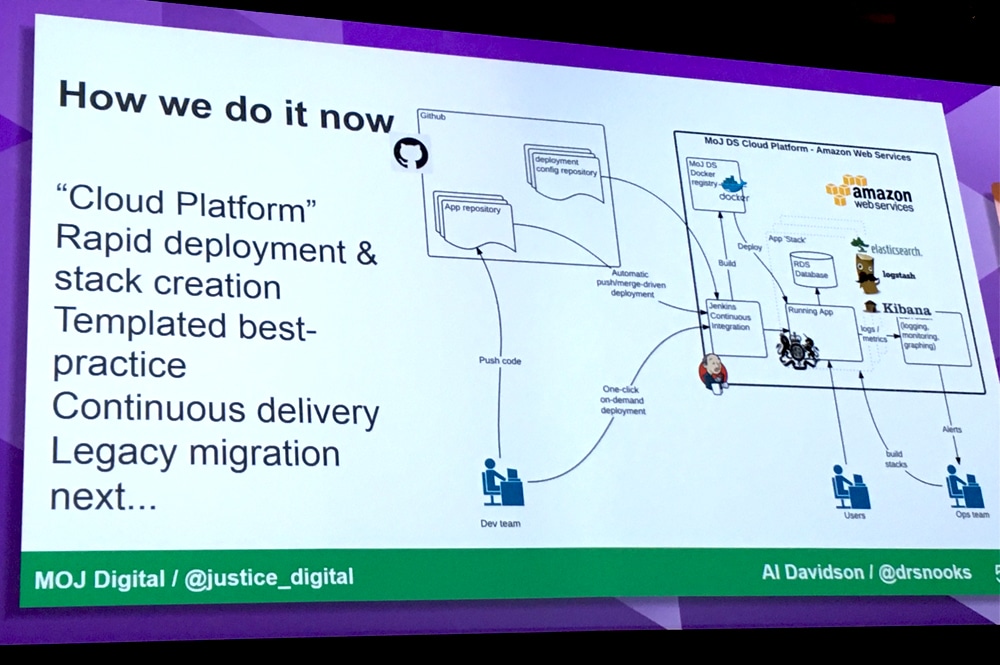

The second slide is about the way to do it.

We strongly agree with Alistair on the way this should be done. Agility, automation, repeatability and continuous integration are key terms for Zaizi, as they are for AWS. That is the main reason for Zaizi to bet on AWS, and it should be the main reason for Government departments to start moving to AWS, especially knowing that by the end of 2016 there will be a region in the UK.

Getting Started With AWS Lambda And Serverless Cloud

No offence to the rest of the talks, but AWS Lambda by Dean Bryen (Solutions Architect at AWS) was for us the star of the day. AWS Lambda is the AWS service that provides serverless computing. But what is serverless computing? According to Wikipedia:

“In computing, serverless computing is a cloud computing code execution model in which the cloud provider fully manages starting and stopping virtual machines as necessary to serve requests, and requests are billed by an abstract measure of the resources required to satisfy the request, rather than per virtual machine, per hour”

Basically, the only thing you have to worry about is writing your own code. With VMs you have to scale the machines, and with containers you have to scale the applications, whereas in serverless computing the unit of scale is the function. In addition, you pay per request, with no hourly, daily or monthly minimums. You pay 21 microcents per 100 ms of compute time.
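To get a feel for what that price means, here is a rough back-of-the-envelope sketch. It uses only the 21 microcents per 100 ms figure quoted above and assumes that each invocation is billed in whole 100 ms units, rounded up. It ignores any separate per-request charge or free allowance, and the function names are our own, not part of any AWS tooling.

```python
# Price taken from the text above: 21 microcents per 100 ms of compute time.
PRICE_MICROCENTS_PER_100MS = 21
MICROCENTS_PER_DOLLAR = 100_000_000  # 100 cents x 1,000,000 microcents


def billed_units(duration_ms: int) -> int:
    """Number of 100 ms units billed for one invocation (assumed rounded up)."""
    return max(1, (duration_ms + 99) // 100)


def compute_cost_dollars(invocations: int, duration_ms: int) -> float:
    """Compute-time cost in dollars for `invocations` runs of equal duration."""
    microcents = invocations * billed_units(duration_ms) * PRICE_MICROCENTS_PER_100MS
    return microcents / MICROCENTS_PER_DOLLAR


if __name__ == "__main__":
    # One million invocations of 250 ms each are billed as 3 units apiece.
    print(compute_cost_dollars(1_000_000, 250))
```

Under these assumptions, a million 250 ms invocations come to 63 cents of compute time, which is why paying per request rather than per server is so attractive for spiky workloads.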

The main benefits of using AWS Lambda can then be summarised as follows:

- No servers to manage

- Continuous scaling

- Never pay for idle or cold servers
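To make "the unit of scale is the function" a bit more concrete, here is a minimal sketch of what a Python function for Lambda could look like. The `(event, context)` signature is the convention Lambda uses for Python handlers; the `name` field in the payload and the shape of the returned dictionary are assumptions made purely for illustration.

```python
import json


def handler(event, context):
    """Entry point that Lambda would call once per request.

    `event` carries the request payload as a dict; `context` carries runtime
    metadata and is not used in this sketch.
    """
    # "name" is a hypothetical payload field chosen for this example.
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }
```

There is no server, port or process management anywhere in the code, which also means it can be tried locally by calling `handler({"name": "Zaizi"}, None)`.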

How To Scale To Millions Of Users With AWS

We always need to consider scalability when we design our systems, so after lunch we went to the talk ‘How to scale to millions of users with AWS’.

Ken Payne, a solutions architect, talked through how we should always separate the web application and database tiers, use S3 to serve static files, cache data as much as possible at multiple levels, and scale the database according to how it is used. Do you have a heavy write load? In that case you should consider federation, sharding or even NoSQL. In the same way, you should use read replicas if your database is mainly used for reads. Last but not least, take advantage of all the auto scaling services!
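As a rough illustration of the sharding and read replica advice, the sketch below routes writes to the primary of a shard chosen by a stable hash of the key, and spreads reads across that shard's replicas in round-robin fashion. The `DatabaseRouter` class and the endpoint names are hypothetical; this is not an AWS API, only one simple way the idea could be expressed.

```python
import hashlib
import itertools


class DatabaseRouter:
    """Picks a (hypothetical) database endpoint name for each query."""

    def __init__(self, shards):
        # shards: list of dicts like {"primary": "db0", "replicas": ["db0-r1"]}
        self._shards = shards
        # One round-robin iterator per shard; fall back to the primary
        # when a shard has no replicas.
        self._readers = [
            itertools.cycle(s["replicas"] or [s["primary"]]) for s in shards
        ]

    def _shard_index(self, key: str) -> int:
        # Stable hash so the same key maps to the same shard in every
        # process (the built-in hash() is randomised for strings).
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % len(self._shards)

    def endpoint_for_write(self, key: str) -> str:
        """Writes always go to the primary of the key's shard."""
        return self._shards[self._shard_index(key)]["primary"]

    def endpoint_for_read(self, key: str) -> str:
        """Reads rotate across the replicas of the key's shard."""
        return next(self._readers[self._shard_index(key)])


if __name__ == "__main__":
    router = DatabaseRouter([
        {"primary": "db0", "replicas": ["db0-r1", "db0-r2"]},
        {"primary": "db1", "replicas": ["db1-r1", "db1-r2"]},
    ])
    print(router.endpoint_for_write("user-42"))
    print(router.endpoint_for_read("user-42"))
```

Note that simple modulo sharding like this remaps most keys whenever the number of shards changes, so a real system would need a plan for resharding.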

Question Time With AWS Engineers

As part of the summit, you could put any questions about building your solution with AWS directly to Amazon architects. There was also an opportunity to ask the presenters questions at the end of each talk.

We were interested in how S3 can be backed up and whether there is a way to recover the state of a bucket at a point in time, just as you can with a database. This is particularly important for us when doing new releases or in disaster recovery scenarios, and it gets harder when you start dealing with petabytes of data.

As far as we were told, there is no easy way to do it right now. It might be possible by customising versioning with some Lambda code, but apparently that is not a trivial thing to do.
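To show why this is not trivial, here is a minimal sketch of the core selection logic only. It assumes versioning was already enabled on the bucket before the target time and that the full version history has been listed into plain dictionaries. The record fields (`key`, `version_id`, `last_modified`, `is_delete_marker`) are our own hypothetical shape, loosely modelled on what a version listing contains, and no AWS calls are made.

```python
from datetime import datetime, timezone


def snapshot_at(versions, point_in_time):
    """Return {key: version_id} describing the bucket at `point_in_time`.

    `versions` is an iterable of dicts with the fields "key", "version_id",
    "last_modified" (timezone-aware datetime) and "is_delete_marker".
    """
    latest = {}
    for record in versions:
        if record["last_modified"] > point_in_time:
            continue  # written after the target time, so not in the snapshot
        current = latest.get(record["key"])
        if current is None or record["last_modified"] > current["last_modified"]:
            latest[record["key"]] = record
    # A key whose newest record is a delete marker did not exist at that time.
    return {
        key: record["version_id"]
        for key, record in latest.items()
        if not record["is_delete_marker"]
    }


if __name__ == "__main__":
    def at(hour):
        return datetime(2016, 7, 7, hour, tzinfo=timezone.utc)

    history = [
        {"key": "a.txt", "version_id": "v1", "last_modified": at(9), "is_delete_marker": False},
        {"key": "a.txt", "version_id": "v2", "last_modified": at(12), "is_delete_marker": False},
        {"key": "b.txt", "version_id": "v2", "last_modified": at(10), "is_delete_marker": True},
    ]
    print(snapshot_at(history, at(11)))
```

Even with this logic in place, an actual restore would still have to list every version in the bucket and copy each selected version back, which is where the petabyte scale starts to hurt.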

Final Thoughts

Ana’s opinion:

The AWS Summit was an amazing experience. Cloud is definitely the way to go, and every day businesses will have more and more services available to meet their requirements. They can now forget about infrastructure and maintenance, as AWS manages everything for them (replication, high availability, backups). Scalability is no longer a problem: with auto scaling, their systems grow or shrink with demand and they only pay for what they actually use.

We all need to rethink our ways of designing systems with new services like Lambda functions and new concepts such as serverless architecture.

We are, indeed, living in exciting times for IT.

Sergio’s opinion:

The AWS Summit only confirmed what my experience over the last few years on different projects (especially government ones) tells me. Cloud is changing the way this world works, and specifically the way systems are architected. This puts even more importance on the microservice approach, which takes design and architecture to a different level, where connecting things properly is becoming more important than building them properly. It is very promising to see that AWS is able to address problems that have caused me so many headaches and extra working hours, and extra cost for the company. I am talking about things like:

- High availability

- Disaster recovery

- Load peaks control

- Unexpected infrastructure cost

- …and I could mention many more

Not everything is amazing, though; I also have a couple of concerns. The first one is: if AWS carries on building microservices, what will I have to do in the future? I have a mortgage to pay. Will I have to go back to the Spanish countryside and grow tomatoes and cucumbers like my great-grandparents once did? Although one could think this is not such a bad idea considering recent news like Brexit, realistically I think that our role will still be key but, as I said, at a different level: connecting microservices in the best way possible.

My other concern, and this is a real one, is how to avoid coupling too tightly to AWS. When starting a project, one of the common questions to address is how to move the system away from the cloud provider if things go wrong. I guess this is where our job becomes important.

To end this blog we would like to make reference to one of the slides shown at the keynote.
